
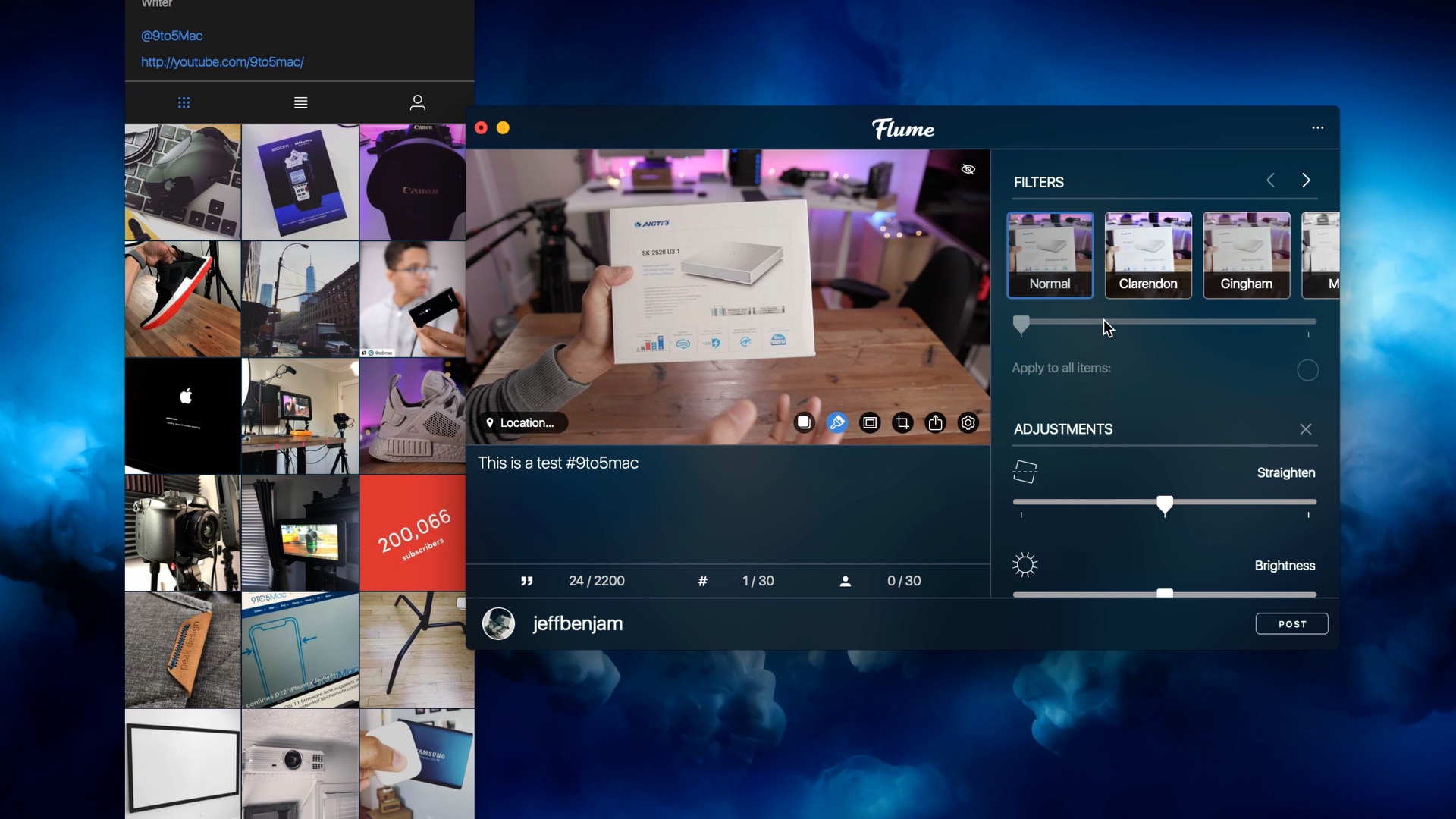

Flume requires Java (7+) and Hadoop to be installed. As we have already installed and configured both, we are good to go.

Next, point `FLUME_HOME` at the Flume installation directory:

```shell
export FLUME_HOME=/usr/local/Cellar/flume/1.6.0/libexec
```

To validate the Flume setup, I am using a Flume configuration that I picked from …

Following is the nf agent configuration file placed under `$FLUME_CONF_DIR`:

```
# Naming the components on the current agent
path = hdfs://localhost:9000/apps/flume/SeqGenAgent/
# Binding the source and sink to the channel
```

Now, we start the Flume agent so that it begins generating sequence numbers. Following is the command to start the agent:

Next, go to HDFS, validate that files are being created there, and view one of them as follows:

That's all, Data Geeks! Hope my learnings have been helpful!

For easy navigation, here's a link to the main post, which links to all posts in this series.
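The setup in this post refers to `$FLUME_CONF_DIR` but only shows the `FLUME_HOME` export. A minimal sketch of a companion environment setup might look like the following. The `conf` and `bin` subdirectories under `FLUME_HOME` are an assumption about the install layout, not something stated in the post, so adjust them to match your installation.

```shell
# Path taken from this post (Flume 1.6.0 under /usr/local/Cellar).
export FLUME_HOME=/usr/local/Cellar/flume/1.6.0/libexec
# Assumption: configs live in $FLUME_HOME/conf and the flume-ng launcher in $FLUME_HOME/bin.
export FLUME_CONF_DIR="$FLUME_HOME/conf"
export PATH="$PATH:$FLUME_HOME/bin"

# Quick prerequisite check: Flume needs Java (7+) and Hadoop on this machine.
for tool in java hadoop flume-ng; do
  if command -v "$tool" >/dev/null 2>&1; then
    echo "found: $tool"
  else
    echo "missing: $tool" >&2
  fi
done
```

The loop only reports what it finds on `PATH`. It does not check the Java version, so `java -version` is still worth running by hand.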
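For a sequence-number test like this one, a complete agent file needs three components: a sequence-generator source, a channel, and an HDFS sink. The sketch below writes such a file with a here-document. The agent name `SeqGenAgent` is inferred from the HDFS path in this post. The component names (`SeqSource`, `MemChannel`, `HDFS`), the file name `seqgen.conf`, and the channel sizes are hypothetical. The property keys (`type = seq`, `type = hdfs`, `hdfs.path`, `hdfs.fileType`, `type = memory`) are standard Flume settings, but it is worth checking them against the Flume user guide for your version. If `FLUME_CONF_DIR` is unset, the file is written to the current directory.

```shell
# Sketch only: SeqSource, MemChannel, HDFS and seqgen.conf are hypothetical names;
# SeqGenAgent is assumed from the HDFS path used in this post.
CONF_FILE="${FLUME_CONF_DIR:-.}/seqgen.conf"

cat > "$CONF_FILE" <<'EOF'
# Naming the components on the current agent
SeqGenAgent.sources = SeqSource
SeqGenAgent.channels = MemChannel
SeqGenAgent.sinks = HDFS

# Source: Flume's built-in sequence generator (emits an increasing counter)
SeqGenAgent.sources.SeqSource.type = seq

# Sink: write events to HDFS as plain text rather than SequenceFiles
SeqGenAgent.sinks.HDFS.type = hdfs
SeqGenAgent.sinks.HDFS.hdfs.path = hdfs://localhost:9000/apps/flume/SeqGenAgent/
SeqGenAgent.sinks.HDFS.hdfs.fileType = DataStream
SeqGenAgent.sinks.HDFS.hdfs.writeFormat = Text

# Channel: in-memory buffer between source and sink (sizes are illustrative)
SeqGenAgent.channels.MemChannel.type = memory
SeqGenAgent.channels.MemChannel.capacity = 1000
SeqGenAgent.channels.MemChannel.transactionCapacity = 100

# Binding the source and sink to the channel
SeqGenAgent.sources.SeqSource.channels = MemChannel
SeqGenAgent.sinks.HDFS.channel = MemChannel
EOF

echo "Wrote $CONF_FILE"
```

A memory channel keeps the test simple, but it loses buffered events if the agent dies. A file channel is the usual choice when durability matters.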
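The start and validation steps in this post can be wrapped in two small shell functions. The sketch below assumes an agent named `SeqGenAgent` and a hypothetical config file `seqgen.conf` under `$FLUME_CONF_DIR`, falling back to the current directory if that variable is unset. It also assumes `flume-ng` and `hdfs` are on `PATH`. The `flume-ng agent` options (`--conf`, `--conf-file`, `--name`) and the `hdfs dfs -ls` / `-cat` commands are the standard Flume and Hadoop CLIs. The function names are illustrative only.

```shell
# Hypothetical helper names and file name; assumes flume-ng and hdfs are on PATH.
CONF_DIR="${FLUME_CONF_DIR:-.}"
CONF_FILE="$CONF_DIR/seqgen.conf"
HDFS_DIR=/apps/flume/SeqGenAgent

# Start the agent in the foreground with console logging (Ctrl+C to stop).
start_seqgen_agent() {
  flume-ng agent \
    --conf "$CONF_DIR" \
    --conf-file "$CONF_FILE" \
    --name SeqGenAgent \
    -Dflume.root.logger=INFO,console
}

# List what the HDFS sink has written, then print the first lines of one file.
# FlumeData is the sink's default file prefix; files still open carry a .tmp suffix.
show_seqgen_output() {
  hdfs dfs -ls "$HDFS_DIR"
  first_file=$(hdfs dfs -ls "$HDFS_DIR" | awk '/FlumeData/ {print $NF; exit}')
  if [ -n "$first_file" ]; then
    hdfs dfs -cat "$first_file" | head -n 10
  fi
}
```

After sourcing these definitions, run `start_seqgen_agent` in one terminal. Once the agent is running, run `show_seqgen_output` in another terminal to see the generated sequence numbers.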


